The explosion of highly capable open-weight models that run locally, like Llama 3 8B and Mistral, has fueled a massive surge in home-brew model training. However, full fine-tuning of these models, even the relatively compact 8-billion-parameter ones, requires an immense amount of VRAM. Enter QLoRA (Quantized Low-Rank Adaptation): a technique that shrinks fine-tuning runs that once needed data-center GPUs down to fit on consumer hardware.

In this efficiency guide, we will demystify QLoRA. We will break down how much VRAM you actually need, map out the hyperparameter settings that keep your GPU from running out of memory, and outline which hardware, like the RTX 5070 Ti, serves as the sweet spot for these local training runs.

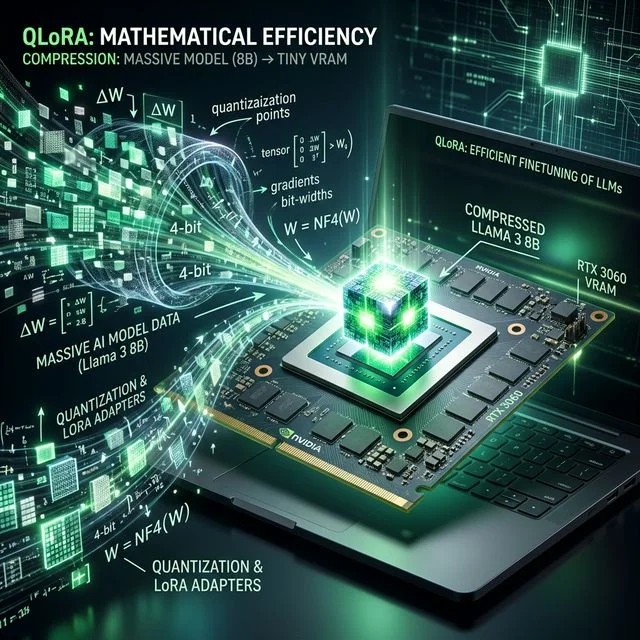

The Magic of 4-bit NormalFloat (NF4)

Before QLoRA, traditional LoRA (Low-Rank Adaptation) techniques froze the base model weights in their standard 16-bit format, meaning a user still needed to load the entire gigantic FP16 model into VRAM alongside the training adapters.

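QLoRA changes this by introducing the 4-bit NormalFloat (NF4) data type. It compresses the base model from 16-bit precision down to a 4-bit representation. NF4 spaces its 16 quantization levels to match the roughly normal distribution of trained weights, so it loses far less information than a naive 4-bit format. This is not a lossless guarantee, but the QLoRA authors report fine-tuning quality close to the 16-bit baseline.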
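To make the idea concrete, here is a minimal sketch of quantile-based 4-bit quantization in plain Python. It is a simplified illustration of the principle behind NF4, not the exact codebook or kernel used by real libraries: the level construction, the 64-weight block size, and all function names are assumptions chosen for the example.

```python
from statistics import NormalDist
import random

def normal_quantile_levels(bits=4):
    """Build 2**bits levels from evenly spaced standard-normal quantiles, scaled to [-1, 1]."""
    n = 2 ** bits
    # Midpoint probabilities avoid the infinite quantiles at 0 and 1.
    qs = [NormalDist().inv_cdf((i + 0.5) / n) for i in range(n)]
    top = max(abs(q) for q in qs)
    return [q / top for q in qs]

def quantize_block(weights, levels):
    """Absmax-scale one block, then store the index of the nearest level per weight."""
    scale = max(abs(w) for w in weights) or 1.0
    codes = [
        min(range(len(levels)), key=lambda i: abs(levels[i] - w / scale))
        for w in weights
    ]
    return codes, scale

def dequantize_block(codes, scale, levels):
    """Map 4-bit codes back to approximate weight values."""
    return [levels[c] * scale for c in codes]

random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(64)]  # one block of 64 weights (assumed size)
levels = normal_quantile_levels()
codes, scale = quantize_block(block, levels)
restored = dequantize_block(codes, scale, levels)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(f"levels={len(levels)}, scale={scale:.5f}, max abs error={max_err:.5f}")
```

Each weight is stored as a 4-bit index plus one shared scale per block, which is where the memory saving comes from. The levels are packed more densely near zero, where most weights sit.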

Because the base model is now frozen in 4-bit, its weight footprint shrinks by roughly 75%. You then attach small 16-bit LoRA adapter weights on top of the quantized model. During training, the frozen 4-bit weights are dequantized on the fly for each computation and never updated; gradients flow through them into the 16-bit adapters, which are the only weights that learn from your training dataset.
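In the Hugging Face ecosystem, this split between a frozen 4-bit base and trainable adapters is usually expressed as two config objects. The fragment below is a sketch, assuming the `transformers`, `peft`, `bitsandbytes`, and `torch` packages are installed; the alpha, dropout, and target module choices are illustrative assumptions, not recommendations from this guide.

```python
import torch
from transformers import BitsAndBytesConfig
from peft import LoraConfig

# Frozen base model: stored as 4-bit NF4, dequantized to bfloat16 for each matmul.
bnb_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type="nf4",
    bnb_4bit_use_double_quant=True,        # also quantize the per-block scales
    bnb_4bit_compute_dtype=torch.bfloat16,
)

# Trainable adapters: rank 16 matches the adapter size used later in this guide.
lora_config = LoraConfig(
    r=16,
    lora_alpha=32,       # assumption: a common choice, not from this guide
    lora_dropout=0.05,   # assumption: illustrative value
    target_modules=[     # assumption: adapt every linear projection
        "q_proj", "k_proj", "v_proj", "o_proj",
        "gate_proj", "up_proj", "down_proj",
    ],
    bias="none",
    task_type="CAUSAL_LM",
)
```

`bnb_config` would typically be passed as `quantization_config` to `AutoModelForCausalLM.from_pretrained`, and `lora_config` to `peft.get_peft_model` once the quantized model is loaded.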

The Minimum Hardware Profile

The beauty of QLoRA is that it democratizes fine-tuning. But it still obeys the strict rules of VRAM scarcity.

VRAM Footprint of Llama 3 8B QLoRA Training:

- Frozen 4-bit Base Model: ~5.5 GB

- LoRA Adapters (Rank 16): ~200 MB

- Training Gradients & Optimizer States (AdamW): ~2 GB to 4 GB

- Context Window Activations (Varies heavily by sequence length): ~3 GB to 6 GB
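The figures above can be sanity-checked with a few lines of arithmetic. This sketch assumes the adapters are attached to all seven linear projections in every transformer block and stored in FP32; both are assumptions, and a narrower setup would be smaller. The layer shapes are those of Llama 3 8B.

```python
# Llama 3 8B shapes: hidden size, MLP size, number of blocks, key/value width.
HIDDEN, INTERMEDIATE, LAYERS, KV_DIM = 4096, 14336, 32, 1024
RANK = 16

# (input_dim, output_dim) of each linear layer in one transformer block.
PROJECTIONS = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV_DIM),
    "v_proj": (HIDDEN, KV_DIM),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}

def lora_params(rank):
    """Each adapted layer adds two matrices: A (rank x in) and B (out x rank)."""
    per_block = sum(rank * (d_in + d_out) for d_in, d_out in PROJECTIONS.values())
    return per_block * LAYERS

adapter_params = lora_params(RANK)
adapter_mb_fp32 = adapter_params * 4 / 1e6

# Budget ranges in GB, copied from the list above.
budget = {
    "base model": (5.5, 5.5),
    "adapters": (0.2, 0.2),
    "gradients + optimizer": (2.0, 4.0),
    "activations": (3.0, 6.0),
}
low = sum(lo for lo, _ in budget.values())
high = sum(hi for _, hi in budget.values())

print(f"adapter params: {adapter_params:,} (~{adapter_mb_fp32:.0f} MB in FP32)")
print(f"estimated total: {low:.1f} GB to {high:.1f} GB")
```

Under these assumptions the adapters come to about 42 million parameters (roughly 168 MB), and the listed ranges add up to 10.7 GB at the low end and 15.7 GB at the high end.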

The Minimum Viable Rig: To reliably train an 8B model via QLoRA without hitting Out of Memory (OOM) errors, you need at least 12GB of VRAM. This makes the older, highly affordable RTX 3060 12GB an excellent low-budget entry point.

The Ideal Enthusiast Rig: If you want to push longer sequence lengths (for example, teaching the model to write code or to read entire documents in context), 12GB runs out quickly. We strongly recommend a 16GB VRAM foundation, such as the newly released NVIDIA RTX 5070 Ti or even the budget-friendly AMD RX 9070. 16GB provides significant headroom for longer sequences and larger batches.

Hyperparameters: The VRAM Killers

A QLoRA training script exposes a handful of hyperparameters that dominate memory use. A single careless value in these settings can push your VRAM consumption from 9GB to 20GB and crash the run with an OOM error.

1. Sequence Length (max_seq_length)

This sets the maximum number of tokens in each training example, which is the amount of context the model sees at once during training. If you train on short tweets, a sequence length of 512 tokens is sufficient and consumes very little VRAM. If you are training a Llama 3 coding assistant, you might need a sequence length of 4096 or 8192 tokens.

VRAM Impact: Very high. Activation memory grows at least linearly with sequence length, and attention implementations that materialize the full score matrix grow quadratically. If you get an OOM error, halving this number is the quickest fix.
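To see why long sequences hurt so much, consider the attention score matrix alone. The sketch below assumes a naive attention implementation that stores the full sequence-by-sequence matrix in 16-bit for 32 heads (the head count of Llama 3 8B) at batch size 1. Fused kernels such as Flash Attention avoid storing this matrix, so treat the numbers as a worst case for one layer, not a measurement.

```python
def attention_scores_bytes(seq_len, n_heads=32, batch_size=1, bytes_per_value=2):
    """Size of one layer's full (seq_len x seq_len) attention score matrix."""
    return batch_size * n_heads * seq_len * seq_len * bytes_per_value

for seq_len in (512, 2048, 4096, 8192):
    mib = attention_scores_bytes(seq_len) / 2**20
    print(f"{seq_len:>5} tokens -> {mib:>6.0f} MiB per layer")
```

Doubling the sequence length quadruples this buffer: 16 MiB at 512 tokens becomes 1024 MiB at 4096 tokens and 4096 MiB at 8192 tokens.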

2. Batch Size and Gradient Accumulation

per_device_train_batch_size dictates how many examples from your dataset are passed through the model simultaneously per training step.

VRAM Impact: High. A batch size of 1 is the safest.

To mimic the effect of a larger batch size (which stabilizes learning curves) without blowing up your VRAM, use gradient_accumulation_steps. Set the batch size to 1 or 2, and set gradient accumulation to 4 or 8. Gradients from several small batches are summed before a single optimizer step, so the effective batch size is the product of the two (for example, 2 × 4 = 8) while peak VRAM stays at the level of the small batch.
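A toy example shows why accumulation is a fair substitute for a larger batch. This sketch uses a hypothetical one-parameter model `y = w * x` with made-up data; it illustrates the arithmetic only and is not how a trainer is implemented internally.

```python
def grad_mse(w, batch):
    """Gradient of mean squared error for the model y = w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]  # made-up (x, y) pairs
w = 0.5

# One large batch of 4 examples.
full_batch_grad = grad_mse(w, data)

# The same data as 4 micro-batches of 1, accumulated before a single update.
micro_batch_size = 1
accumulation_steps = 4
accumulated = 0.0
for i in range(accumulation_steps):
    micro_batch = data[i * micro_batch_size:(i + 1) * micro_batch_size]
    # Divide by the step count so the running sum ends up as a mean.
    accumulated += grad_mse(w, micro_batch) / accumulation_steps

effective_batch_size = micro_batch_size * accumulation_steps
print(full_batch_grad, accumulated, effective_batch_size)
```

With equal-sized micro-batches the accumulated gradient matches the full-batch gradient up to floating-point rounding, but only one micro-batch of activations is ever held in memory at a time.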

3. Gradient Checkpointing

Always turn this on.

By enabling gradient_checkpointing=True inside your Hugging Face or Unsloth trainer scripts, the framework trades roughly 10-20% slower training for large VRAM savings: it discards most intermediate activations during the forward pass and recomputes them during the backward pass. This single setting regularly saves 2GB to 4GB of VRAM during 8B training runs.
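Put together, the batch size, accumulation, and checkpointing settings from this section might look like the following in a Hugging Face script. This is a configuration sketch, assuming the `transformers` package; the output path, learning rate, epoch count, and bf16 flag are illustrative assumptions. Sequence length is left out because where it is set depends on the trainer or tokenizer you use.

```python
from transformers import TrainingArguments

training_args = TrainingArguments(
    output_dir="qlora-llama3-8b",      # hypothetical output path
    per_device_train_batch_size=1,     # smallest activation footprint
    gradient_accumulation_steps=8,     # effective batch size of 1 * 8 = 8
    gradient_checkpointing=True,       # recompute activations in the backward pass
    bf16=True,                         # assumption: the GPU supports bfloat16
    learning_rate=2e-4,                # assumption: illustrative value
    num_train_epochs=1,                # assumption: illustrative value
)
```

If a run still hits OOM with these values, sequence length is the remaining lever, since batch size is already at its minimum.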

Accelerating the Process: Unsloth and Flash Attention

Even with QLoRA, training an 8B model can take several hours on consumer graphics cards.

To maximize your workflow efficiency, use optimized frameworks. The popular Unsloth library, which advertises roughly a 2x speedup, accelerates standard Hugging Face pipelines, cutting total runtime nearly in half while also reducing VRAM use. If you are training on an NVIDIA RTX 50-series card, enabling Flash Attention 2 in your scripts helps you get the most out of the card's architecture.
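One cautious way to enable Flash Attention 2 is to request it only when the package is actually installed and otherwise fall back to PyTorch's built-in attention. The helper below is a hypothetical convenience function, not part of any library, and it checks only that `flash_attn` is importable, not that your GPU and driver support it.

```python
import importlib.util

def pick_attn_implementation():
    """Prefer Flash Attention 2 when flash_attn is installed, else fall back to SDPA."""
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attention_2"
    return "sdpa"  # PyTorch's built-in scaled dot-product attention

attn_implementation = pick_attn_implementation()
print(attn_implementation)
```

The returned string can be passed as the `attn_implementation` argument to `AutoModelForCausalLM.from_pretrained` in a Hugging Face script.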

If your local environment is acting sluggish and constantly tripping memory limits, take the time to run your target model through our Will It Run? VRAM utility before hitting execute. QLoRA is the closest thing to magic in local AI: respect the hardware limits, and it makes building personalized, tightly focused local reasoning models practical.

About the Author: Justin Murray

The founder of AI Computer Guide, Justin has over a decade of AI and computer hardware experience. From leading the cryptocurrency mining hardware rush to repairing personal and commercial computer hardware, he has always had a passion for sharing knowledge and staying on the cutting edge.