AI Hardware Articles

Deep dives, analysis, and setup guides on building the right hardware for modern local AI and agent workflows.

April 2026

New & Free: Microsoft VibeVoice Software Guide – The Future of Frontier Voice AI

A comprehensive guide to Microsoft’s new 7.5 Hz speech model. Learn how it handles high-fidelity voice cloning, real-time streaming, and the VRAM needed to run it locally.

April 2026

Everything You Need to Know About Hermes AI Agent

The definitive guide to NousResearch's Hermes Agent. Discover its 3-layer memory system, 40+ built-in tools, and how it compares to OpenClaw.

March 2026

TurboQuant: Redefining AI Efficiency with Extreme Compression

A deep dive into Google's TurboQuant algorithm. Learn how it achieves 3-bit KV cache compression without sacrificing AI model accuracy or speed.

April 2026

Top 100 Local AI Models For Privacy + Best Outputs (2026)

The complete 2026 guide to 100 local AI models — from frontier LLMs and coding agents to image gen, video, voice, music, and embeddings. VRAM requirements, benchmarks, and HuggingFace download links for every model.

March 2026

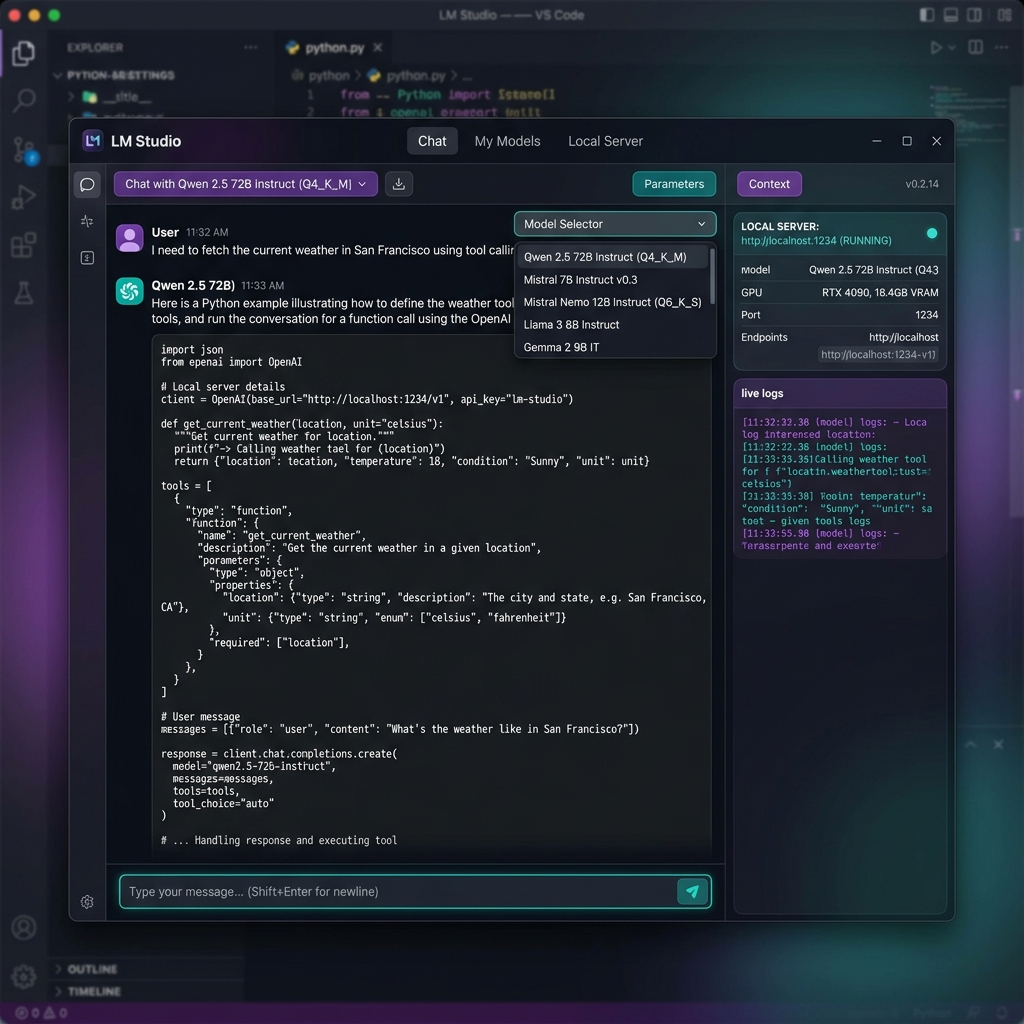

The Essential Guide to LM Studio — Run Local LLMs + Tools

Everything you need to run local LLMs in 2026. Download models, spin up an OpenAI-compatible local server, use tool-calling agents, and connect MCP servers — all 100% offline.

March 2026

Best Local AI Video Generators: A Complete Guide

Learn how to generate fluid, high-fidelity AI video locally in 2026. Explore the best models (LTX-2, SVD, AnimateDiff) and hardware requirements.

March 2026

Best Local AI Image Generators: A Complete Guide

Discover the world of local AI image generators. Learn what they are, the hardware requirements, and how to set up Stable Diffusion locally in 2026.

March 2026

Setting Up Ollama with Open WebUI for a ChatGPT-like Experience

Open WebUI gives your local models a professional, self-hosted frontend. Get a private ChatGPT alternative running in under 10 minutes using Docker and Ollama.

March 2026

How to Run OpenClaw on a Home Server Using Docker and Ollama

Hosting your own AI agent is the ultimate power move for privacy and cost savings. Get the full step-by-step to deploy OpenClaw with Docker on your own hardware.

March 2026

Local vs. Cloud Agents: Breaking Down the $15,000/year API Cost Savings

Businesses running autonomous agents are waking up to the "Token Tax." Discover the math behind how a single local workstation saves you over $15,000 per year.

March 2026

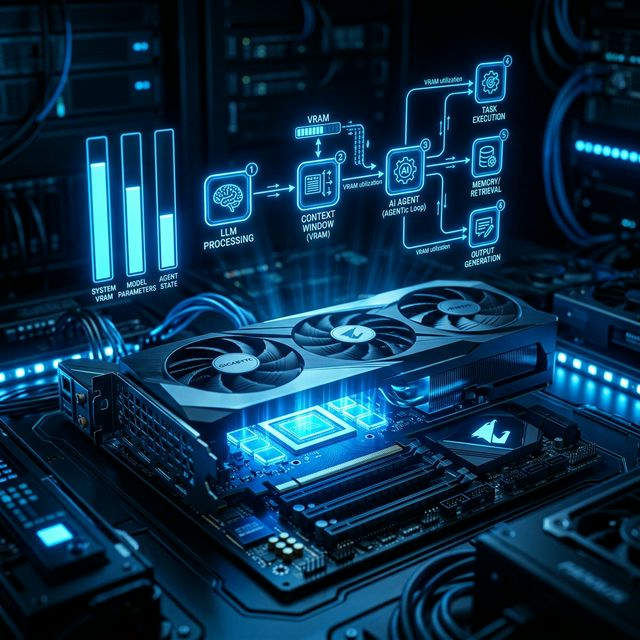

Why AI Agents Need More VRAM: Planning Your Hardware for Multi-Agent Workflows

In the 2026 Agent Era, VRAM is the hard boundary between a system that works and one that crashes. Learn why you need to over-spec your memory.

Hardware Guides & Tutorials

Step-by-step instructions and technical guidance on running local AI effectively.

Host Small Business AI Locally: Replace Monthly Cloud Subscriptions

A comprehensive guide for small businesses to replace expensive cloud AI subscriptions with a single localized mini PC setup running open-source models like Qwen and Llama.

Best Local AI Coding Models of 2026: VRAM Tiers and Benchmarks

The definitive guide to the best local AI coding models in 2026. Ranked by VRAM requirements, hardware needs, benchmarks, and editor setup. Replace GitHub Copilot with privacy.

Dataset Quality: Better Models with Fewer Tokens

Why 1,000 high-quality tokens beat 50k noisy ones for specialized task fine-tuning.

Full Fine-Tuning vs PEFT: The VRAM Reality Check

Do you need an A100 or an RTX 4090? We compare the VRAM cost of all fine-tuning methods.

Unsloth: The 2x AI Training Speedup Tutorial

Unsloth is taking the local AI world by storm. Discover how to reduce VRAM by 70% and double your training speed.

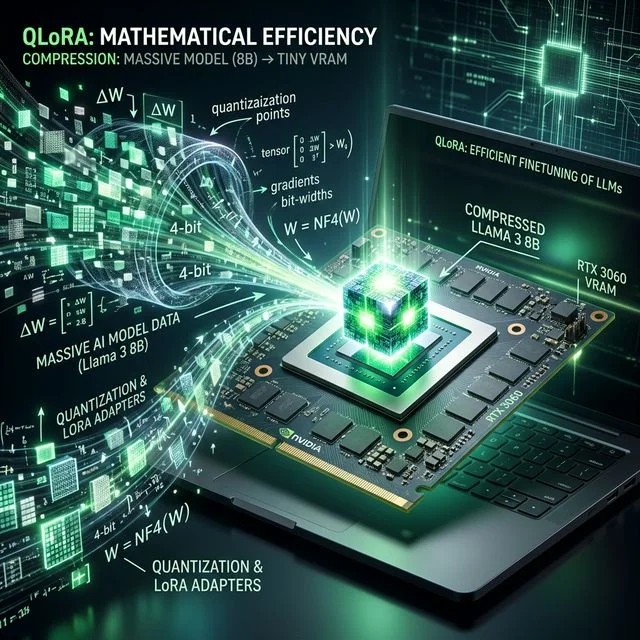

Mastering QLoRA for 8B Models: Efficiency Guide

Learn the exact VRAM requirements and hyperparameter settings for QLoRA fine-tuning on Llama 3 8B.

NVIDIA RTX 5090 Blackwell: The New AI Standard

The RTX 5090 is officially here. We break down its performance for local LLM inference and training.

Fine-tuning 8B Models on a Budget: 16GB is the Key

Learn why the AMD RX 9070 and RTX 5070 Ti are great for entry-level model fine-tuning.

Stable Diffusion XL: Does VRAM Capacity Affect Speed?

In image generation, VRAM affects batch size and resolution. We compare RTX 4090 vs RTX 5080.

Llama 3.3 Hardware Requirements: What You Actually Need

Everything you need to know about running Llama 3.3 locally, from VRAM capacity to system memory overhead.

Best GPU for DeepSeek R1: The Ultimate VRAM Guide

DeepSeek R1 requires massive VRAM for native inference. Learn how quantization, FP8 precision, and CUDA cores impact performance.

RTX 4090 vs RTX 3090 for Local LLMs — Which Should You Buy in 2026?

RTX 4090 vs RTX 3090 for local LLMs: head-to-head benchmarks, VRAM analysis, price comparison, and a clear verdict on which 24GB GPU is worth your money in 2026.

Best GPU for Local AI & LLMs in 2026

The best GPUs for running local LLMs in 2026, ranked by budget. VRAM requirements, tokens/sec benchmarks, model compatibility, and affiliate links for every tier.

How Much VRAM Do You Need to Run LLMs in 2026? The Complete Guide

The definitive VRAM guide for running local LLMs in 2026. Model-by-model VRAM requirements, quantization explained, and GPU recommendations for every budget.

Best Budget GPU for AI in 2026: Under $300, $400, and $500 Picks

The best budget GPUs for running local AI in 2026, organized by price tier. Top picks for under $300, $400, and $500 with real benchmarks, VRAM analysis, and model compatibility.